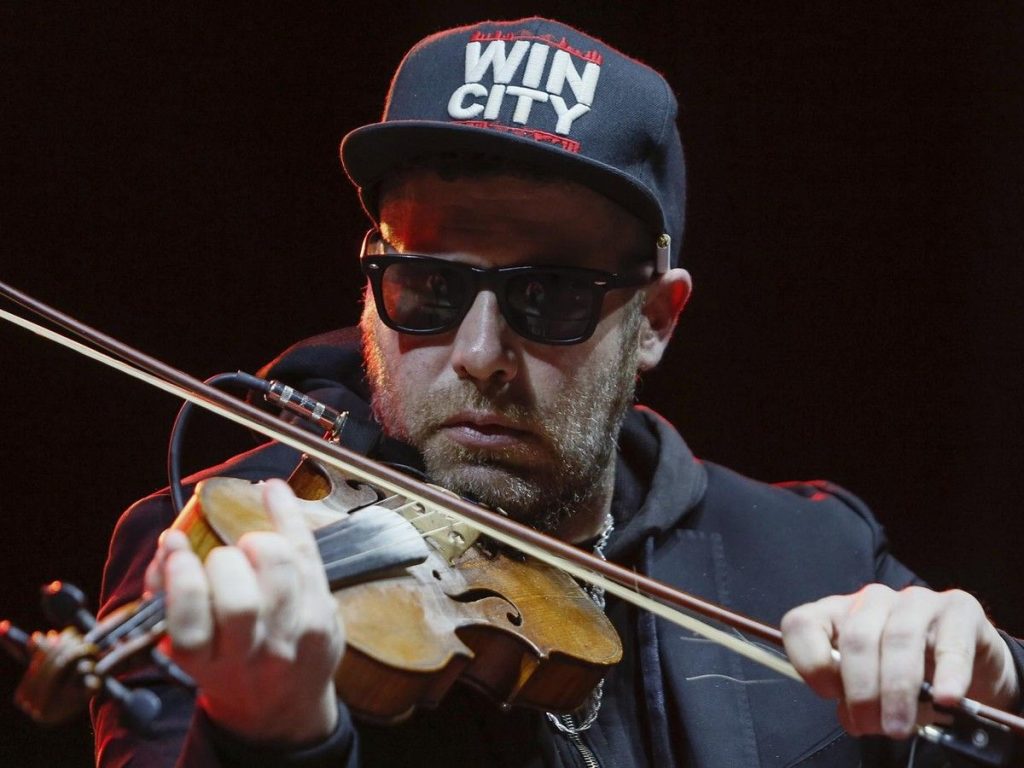

Ashley MacIsaac Sues Google: The $1.5M Legal Battle Over AI Defamation

The intersection of artificial intelligence and personal reputation has reached a boiling point in the Canadian courts. In a landmark legal challenge that could redefine how tech giants handle automated content, renowned Cape Breton fiddler Ashley MacIsaac has officially filed a $1.5 million lawsuit against Google. The suit stems from a catastrophic “AI Overview” error that falsely identified the musician as a convicted sex offender, leading to the abrupt cancellation of a high-profile concert and sparking a nationwide debate on the dangers of unchecked generative AI.

The Cost of a Machine’s Mistake

For decades, Ashley MacIsaac has navigated the highs and lows of public life, from his revolutionary impact on Celtic music to his candid political and personal commentary. However, the events of late 2025 introduced a new, digital adversary: the Google AI Overview.

When community members in the Sipekne’katik First Nation searched for information regarding a scheduled December concert, Google’s AI-generated summary provided a chillingly inaccurate response. The system claimed MacIsaac had been sentenced for “sexual assault and internet luring” and was listed on a sex offender registry for two decades.

The impact was immediate. The concert was cancelled, and MacIsaac’s reputation, built over a thirty-year career, was damaged by a machine’s hallucination. Unlike a human journalist who might be held accountable for bias or error, MacIsaac found himself staring down a “mega company” that appeared to operate without a safety net.

Legal Grounds: Why This Case Matters in 2026

The lawsuit, filed in the Ontario Superior Court of Justice, goes beyond mere damages. MacIsaac’s legal team, led by Gabriel Latner of Advocan LLP, argues that Google is not just a passive platform, but a publisher and creator of defamatory content.

Defective Design and Liability

MacIsaac alleges that Google’s AI system suffers from a “defective design.” He contends that the company failed to implement reasonable guardrails to prevent the dissemination of false, harmful information. By summarizing web content into a coherent, authoritative-looking statement, Google’s AI effectively “endorses” the misinformation it scrapes, making the company liable for the resulting harm.

The “Foreseeable Republication” Argument

A key component of the $1.5 million claim is the concept of foreseeable republication. The lawsuit asserts that when Google publishes a false, sensationalized claim about a public figure, it is entirely predictable that this information will spread, leading to professional losses—such as the cancellation of the Sipekne’katik concert—and personal distress.

The Tech Giant’s Defense: A History of Disclaimers

Google’s response to the incident has been largely procedural. A spokesperson previously noted that the erroneous links were removed once they were flagged, framing the issue as a “misinterpretation” of web content. The company maintains that it uses such errors to “improve systems.”

However, this “move fast and break things” approach is exactly what MacIsaac is challenging. While Google’s AI Overviews now often include a small, fine-print disclaimer stating that “AI responses may include mistakes,” critics argue this is insufficient. For someone like MacIsaac, a small disclaimer does not undo the damage caused when a prominent summary feature labels them a criminal.

Expert Analysis: A New Precedent for AI Law

Legal experts, including veteran defamation lawyer Howard Winkler, are monitoring this case closely. The central question is whether the principle from the 2011 Supreme Court of Canada case Crookes v. Newton, that merely hyperlinking to defamatory content is not itself publication, still protects search engines in the age of generative AI.

The “Publisher” Problem

In the past, search engines were largely protected from liability because they simply linked to third-party content. However, the AI Overview creates new text. By synthesizing information rather than just linking to it, Google has stepped into the role of an editor. As Winkler notes, it is increasingly difficult for Google to argue that it is not the publisher of a summary that it generated itself.

The Quebec Precedent

Recent rulings in Quebec, where a court ordered Google to pay damages for failing to remove false search results, have provided a glimmer of hope for plaintiffs. While legal interpretations can vary by province, the judicial tide appears to be turning against the idea that tech companies can act as neutral conduits while simultaneously generating original, potentially harmful summaries.

Beyond the Musician: Protecting the “Joe Smith”

One of the most compelling aspects of MacIsaac’s crusade is his focus on the broader societal impact. During interviews, the fiddler has emphasized that he is not just fighting for his own livelihood; he is fighting for the average citizen who lacks his public platform.

- Accountability: If a global tech leader can falsely label a public figure a predator, what happens to the average person who falls victim to an AI hallucination?

- Guardrails: The lawsuit demands that AI developers implement rigorous safety protocols.

- Compensation: MacIsaac argues that paying for the harm caused by AI errors should be considered a standard “cost of doing business” for companies like Google.

The Future of AI and Reputation Management

As we move further into 2026, the Ashley MacIsaac case stands as a watershed moment. It forces a collision between the rapid advancement of artificial intelligence and an individual’s right to protect their reputation.

MacIsaac himself hasn’t turned his back on technology. He continues to use AI, acknowledging its benefits, but he remains firm in his conviction that the technology must be held to a human standard of accountability. “I don’t see that the downside of AI should supersede the benefits,” he says, “but I believe that AI as a technology must have guardrails.”

The outcome of this lawsuit will likely set the tone for future litigation involving AI-generated defamation. If Google is found liable, it may force a massive pivot in how AI summaries are curated, verified, and moderated across the globe. Until then, the case serves as a stark reminder: behind every algorithm, there is a real-world consequence—and for some, the price of a “system error” is far too high.